Problem

The Reaper is terrorizing Starbright City! He’s captured the local superhero Foresight and trapped them. At the villain’s mercy, Foresight is given a sadistic choice…

The Reaper has taken control of a trolley car careening down Path A. Today is scheduled maintenance day, and five workers are cleaning Path A; they’ll be killed unless the trolley’s path is changed. But the only branch leads to Path B, where one worker is still on the tracks.

After telling Foresight all this, The Reaper reveals he’s left the track switch in their holding cell. The choice is up to them: do nothing and watch five innocents die, or flip the switch and directly contribute to one person’s death.

The Explanation

On the one hand, murder is wrong. Even if it’s to save others, trading lives isn’t exactly heroic. On the other hand, doesn’t Foresight owe it to the city to minimize suffering? Refusing to act means more people die. There isn’t one right answer to this dilemma. Strip away the heroic set-dressing, and you’re left with the classic trolley problem.

Definition of the Trolley Problem

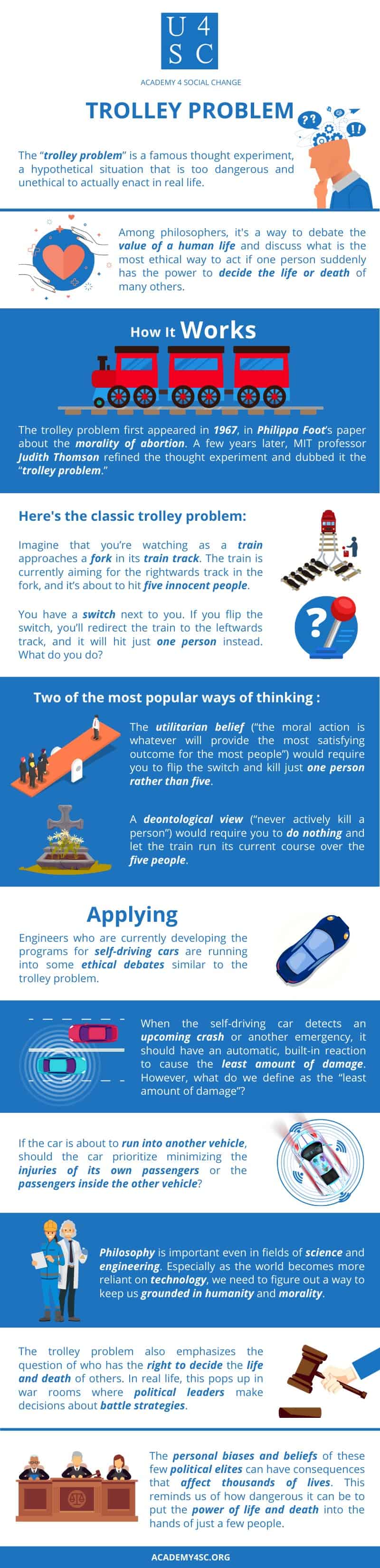

The “trolley problem” is a famous thought experiment used to debate the value of a human life and to discuss the most ethical way to act when one person suddenly holds the power to decide whether others live or die. The classic trolley problem involves choosing between doing nothing, which lets a runaway trolley kill five people, and flipping a switch to redirect it so that it kills one person instead.

How It Works

A version of the trolley problem first appeared in 1967, in philosopher Philippa Foot’s paper about the morality of abortion. Nearly a decade later, in 1976, MIT philosophy professor Judith Jarvis Thomson refined the thought experiment and dubbed it the “trolley problem.”

What you see as the correct decision often depends on whether your moral code leans toward one of two major ethical frameworks: deontological or utilitarian ethics. Deontology states that an act’s morality depends on the nature of the act itself, judged against moral duties or rules. Consequences can’t change the act’s inherent morality. A strict deontological view, which holds that actively killing someone is wrong regardless of the outcome, would have you do nothing and let the trolley run its current course over the five people. Utilitarianism states that consequences determine an act’s morality and that the morally correct act is the one that brings the greatest happiness to the greatest number of people. The utilitarian view would have you flip the switch and kill one person rather than five.

So What?

Engineers currently developing the software for self-driving cars are running into ethical debates similar to the trolley problem. When a self-driving car detects an imminent crash or other emergency, it needs an automatic, built-in response that causes the least possible damage. But how do we define the “least possible damage”? If the car must choose between hitting five pedestrians or one pedestrian, which option should it choose? If the car is about to hit another vehicle, should it prioritize minimizing injuries to its own passengers or to the passengers in the other vehicle? Philosophy, in other words, matters even in science and engineering. As the world grows more reliant on technology and automated machines, we need ways to keep that technology grounded in human values and morality.

The trolley problem also raises the question of who has the right to decide the life and death of others. In real life, versions of the trolley problem arise in war rooms, where political leaders decide on battle strategy and where to send their troops. The personal biases and philosophical beliefs of these few political elites can have consequences that affect thousands of lives. Here, the trolley problem reminds us how dangerous it is to put the power of life and death into the hands of just a few people.